I remember sitting in a dimly lit studio three years ago, staring at a VR headset and feeling nothing but pure, unadulterated motion sickness. The visuals were technically “perfect,” yet my brain was screaming that something was fundamentally wrong with the way the space was being rendered. Everyone in the room was busy geeking out over polygon counts and lighting shaders, but they were completely ignoring the actual magic of perceptual depth mapping. We spend so much time obsessing over raw data and resolution that we forget the most important part: how the human eye actually interprets distance and scale to make a digital world feel lived-in rather than just observed.

I’m not here to feed you a textbook definition or a list of expensive hardware requirements that won’t actually fix your immersion issues. Instead, I want to pull back the curtain on how you can use perceptual depth mapping to bridge that uncanny gap between “looking at a screen” and actually being there. I promise to skip the academic fluff and give you the straight-up, battle-tested insights I’ve picked up from years of failing, iterating, and finally getting it right.


Neuromorphic Visual Perception and the Brain's Logic

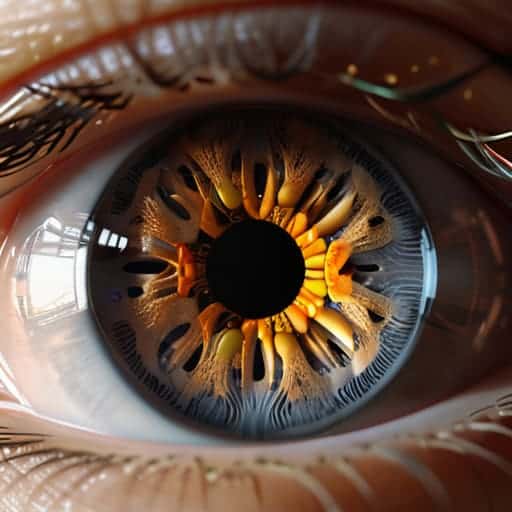

To understand how we build better digital spaces, we have to look at how our biology actually processes light and shadow. Our brains aren’t just cameras; they are prediction engines. Even when we’re looking at pixels on a flat screen, we subconsciously estimate the distance between objects based on how they move and overlap. This isn’t a biological quirk; it’s the foundation of how we interpret reality.

In the digital realm, we mimic this biological logic through specific spatial cues. Rather than placing every element on the same flat plane, designers use occlusion and layering to signal that one object is “closer” to the user than another. By subtly adjusting shadows or applying slight scale shifts, we trick the brain into accepting a 2D surface as a 3D playground. When these cues align with our evolutionary expectations, the interface stops feeling like a piece of glass and starts feeling like a tangible environment we can actually inhabit.
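A slight scale shift works as a depth cue because, in a simple pinhole-camera model of the eye, apparent size falls off in proportion to 1/distance. Here’s a minimal sketch under that assumption; the function names and the `reference_depth` convention are hypothetical, not taken from any particular framework.

```python
def projected_size(real_size: float, distance: float, focal_length: float = 1.0) -> float:
    """Apparent size under a pinhole-camera model: size / distance, scaled by focal length."""
    if distance <= 0:
        raise ValueError("distance must be positive")
    return focal_length * real_size / distance


def scale_for_depth(depth: float, reference_depth: float = 1.0) -> float:
    """Scale factor for a layer at `depth`, relative to one at `reference_depth`.

    Doubling the depth halves the scale, which is the size-distance
    relationship the eye expects from the physical world.
    """
    return projected_size(1.0, depth) / projected_size(1.0, reference_depth)


# A card pushed 25% farther back than the reference plane shrinks to 80%.
print(scale_for_depth(1.0), scale_for_depth(1.25))
```

In practice you’d apply the returned factor as a transform (for example a CSS `scale()`), keeping the change small so it reads as distance rather than as a resized element.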

Harnessing Depth Cues in Interface Design

Once we understand how the brain interprets space, the next logical step is translating those biological shortcuts into something a user can actually touch and feel. On a screen, every pixel sits at the same physical distance from the eye, but we can still mimic real-world depth signals through clever occlusion and layering techniques. By allowing one element to partially overlap another, we give the brain an instant, subconscious signal about what sits “on top.” This isn’t just about aesthetics; it’s about reducing the cognitive load required to navigate a screen.
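On a flat display, occlusion usually comes down to draw order. Below is a minimal sketch of the painter’s algorithm, assuming each element carries a hypothetical `depth` value where larger means farther from the viewer: draw the farthest layer first, and nearer layers cover it wherever they overlap.

```python
from dataclasses import dataclass


@dataclass
class Layer:
    name: str
    depth: float  # hypothetical convention: larger = farther from the viewer


def paint_order(layers: list[Layer]) -> list[str]:
    """Return layer names in back-to-front draw order (painter's algorithm).

    Drawing the farthest layer first lets nearer layers paint over it
    where they overlap, which is what produces the occlusion cue.
    """
    ordered = sorted(layers, key=lambda layer: layer.depth, reverse=True)
    return [layer.name for layer in ordered]


scene = [
    Layer("tooltip", depth=0.1),
    Layer("background", depth=10.0),
    Layer("card", depth=2.0),
]
print(paint_order(scene))  # ['background', 'card', 'tooltip']
```

In CSS this ordering is roughly what `z-index` controls within a stacking context; in a canvas or game engine you’d sort draw calls the same way.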


When we move beyond the flat 2D grid, we start seeing the real power of using the z-axis in UI. Instead of treating every button and window as if they exist on a single sheet of paper, we treat the interface as a volumetric space. This creates a sense of hierarchy that feels intuitive rather than forced. When a modal window pops up with a subtle drop shadow, it’s not just a design choice: it’s a way of using depth perception to tell the user, “Hey, pay attention to this specific layer right now.”
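One way to make the z-axis explicit is to give each element an elevation level and derive its shadow from that number, so a modal never gets its shadow by ad-hoc styling. This is a hypothetical mapping; the constants are illustrative, not taken from any design system. Higher elevation gets a larger offset and softer blur, rendered as a CSS `box-shadow` value.

```python
from dataclasses import dataclass


@dataclass
class Shadow:
    offset_y: float  # px; the shadow falls below the element
    blur: float      # px; softer edges read as "higher above the surface"
    opacity: float   # 0..1


def shadow_for_elevation(elevation: int) -> Shadow:
    """Map an integer elevation level to shadow parameters.

    Illustrative constants: offset and blur grow with elevation, while
    opacity fades slightly (never below 0.12) so high layers look lifted
    rather than dark.
    """
    if elevation <= 0:
        return Shadow(0.0, 0.0, 0.0)  # flat elements cast no shadow
    return Shadow(
        offset_y=1.0 * elevation,
        blur=2.0 * elevation,
        opacity=max(0.12, 0.3 - 0.03 * elevation),
    )


def to_css(shadow: Shadow) -> str:
    """Render the shadow as a CSS box-shadow value."""
    if shadow.opacity == 0.0:
        return "none"
    return f"0 {shadow.offset_y:g}px {shadow.blur:g}px rgba(0, 0, 0, {shadow.opacity:.2f})"


print(to_css(shadow_for_elevation(0)))  # none
print(to_css(shadow_for_elevation(4)))  # 0 4px 8px rgba(0, 0, 0, 0.18)
```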

Pro-Tips for Mastering the Third Dimension

- Stop relying on shadows alone; use atmospheric perspective by subtly desaturating and blurring objects that sit farther back in your digital space.

- Don’t overdo the occlusion—just because an object is “behind” something doesn’t mean it should be completely invisible; use slight edge highlights to maintain spatial awareness.

- Scale is your best friend, but use it sparingly; a tiny change in the relative size of elements can make the eye perceive a large difference in distance, no 3D rendering required.

- Use motion parallax to ground your users; when layers move at different speeds during a scroll or transition, with farther layers moving more slowly, the brain readily accepts the depth as “real.”

- Watch your lighting consistency like a hawk; if your light source shifts between layers, the entire illusion of depth collapses into a confusing, flat mess.
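Several of these tips can be driven from a single depth value per layer. Here’s a minimal sketch, assuming a normalized depth in [0, 1] (0 = nearest, 1 = farthest) and illustrative constants you’d tune by eye. Atmospheric perspective lowers saturation and adds blur with depth, motion parallax scrolls far layers more slowly, and every shadow is derived from one shared, hypothetical `LIGHT_DIR` so the light source can’t drift between layers.

```python
import math
from dataclasses import dataclass

# One light direction for the whole scene (hypothetical: up and to the left,
# in screen coordinates where y grows downward). Every shadow is derived
# from this single vector, which keeps lighting consistent across layers.
LIGHT_DIR = (-1.0, -1.0)


@dataclass
class DepthStyle:
    saturation: float  # 1.0 = full color, lower = washed out
    blur_px: float
    parallax: float    # fraction of the scroll distance the layer moves
    shadow_dx: float
    shadow_dy: float


def style_for_depth(depth: float, max_blur_px: float = 4.0,
                    min_saturation: float = 0.5, min_parallax: float = 0.2,
                    shadow_len: float = 6.0) -> DepthStyle:
    """Derive atmospheric-perspective, parallax, and shadow settings from depth."""
    d = min(max(depth, 0.0), 1.0)  # clamp to [0, 1]
    lx, ly = LIGHT_DIR
    norm = math.hypot(lx, ly)
    # Nearer layers sit higher above the background, so they cast longer
    # shadows; shadows always point away from the shared light.
    length = shadow_len * (1.0 - d)
    return DepthStyle(
        saturation=1.0 - (1.0 - min_saturation) * d,
        blur_px=max_blur_px * d,
        parallax=1.0 - (1.0 - min_parallax) * d,
        shadow_dx=-lx / norm * length,
        shadow_dy=-ly / norm * length,
    )


def layer_offset(scroll_y: float, depth: float) -> float:
    """Vertical offset for a layer: far layers move less than near ones."""
    return scroll_y * style_for_depth(depth).parallax


for depth in (0.0, 0.5, 1.0):
    s = style_for_depth(depth)
    print(depth, round(s.saturation, 2), s.blur_px, round(layer_offset(100.0, depth), 1))
```

The returned values map naturally onto CSS `filter: saturate(...) blur(...)` and a scroll-linked transform, but the linear ramps here are a simplification; real atmospheric falloff is rarely linear.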

The Bottom Line: Why Depth Matters

Depth mapping isn’t just about math; it’s about mimicking the biological shortcuts our brains use to make sense of a 3D world.

Good interface design uses these natural cues to guide a user’s eye without them even realizing they’re being directed.

Moving beyond flat visuals means creating digital spaces that feel intuitive, grounded, and—most importantly—human.

The Illusion of Reality

“We aren’t just rendering pixels on a screen; we’re trying to trick the human brain into believing there’s a world behind the glass. If the depth mapping is off by even a fraction, the magic evaporates and all you’re left with is a flat, lifeless image.”


The Final Dimension

We’ve journeyed from the biological intricacies of how our neurons decode space to the practical, pixel-perfect applications of depth in modern UI. Perceptual depth mapping isn’t just a technical checkbox or a clever mathematical trick; it is the bridge between a sterile, two-dimensional screen and a truly immersive experience. By understanding the predictive shortcuts our brains rely on and the subtle visual cues that feed them, we move past simply displaying information and start shaping how users actually feel within a digital environment. We aren’t just building interfaces anymore; we are constructing spatial realities.

As we look toward a future defined by spatial computing and augmented realities, the mastery of depth will separate the mediocre from the transcendent. The goal is no longer to make things look “good,” but to make them feel inevitable. When we get depth right, the technology seems to vanish, leaving only a seamless connection between human intent and digital response. So, as you move forward in your own design or engineering pursuits, don’t just aim for clarity—aim for presence. Build worlds that don’t just sit on a screen, but ones that invite us to step inside.

Frequently Asked Questions

How do we prevent "depth fatigue" when an interface uses too many layers or shadows?

The trick is to stop treating every element like it’s floating in a void. Depth fatigue happens when your eyes are constantly working to recalibrate distance. To fix this, use “depth hierarchy.” Reserve heavy shadows and multi-layered stacking for primary actions—like a modal or a floating button—and keep the background elements flat. If everything is shouting for attention through shadows, nothing stands out. Use subtle, consistent elevation to guide the eye, not overwhelm it.
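A depth hierarchy is easy to enforce mechanically. Here’s a minimal sketch, assuming hypothetical role names, elevation values, and a budget of two “heavy” elements per screen: primary surfaces like modals and floating buttons get real elevation, everything else stays flat, and a check flags screens where too many elements are shouting for attention.

```python
# Hypothetical elevation budget: only primary surfaces get lifted.
ELEVATION_BY_ROLE = {
    "background": 0,
    "content": 0,
    "card": 1,
    "floating_button": 6,
    "modal": 24,
}
HEAVY_THRESHOLD = 6  # elevations at or above this count as "heavy" shadows
MAX_HEAVY = 2        # how many heavy elements one screen may show at once


def assign_elevations(roles: list[str]) -> list[int]:
    """Look up each element's elevation; unknown roles default to flat (0)."""
    return [ELEVATION_BY_ROLE.get(role, 0) for role in roles]


def check_depth_budget(roles: list[str]) -> list[str]:
    """Return warnings when a screen spends more depth than the budget allows."""
    heavy = sum(1 for e in assign_elevations(roles) if e >= HEAVY_THRESHOLD)
    if heavy > MAX_HEAVY:
        return [f"{heavy} heavy-shadow elements; at most {MAX_HEAVY} recommended"]
    return []


print(check_depth_budget(["background", "content", "card", "modal"]))
print(check_depth_budget(["modal", "floating_button", "floating_button"]))
```

The exact numbers matter less than the rule they encode: depth is a limited budget, and the flat background is what makes the lifted elements stand out.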

Can perceptual depth mapping actually help reduce eye strain in long-term VR usage?

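To a degree, yes, and it’s one of the biggest hurdles in VR right now. The “vergence-accommodation conflict” is basically your eyes fighting themselves: they focus at the fixed optical distance set by the headset’s lenses while converging on whatever depth the content appears to sit at, whether that’s a menu at arm’s length or a mountain miles away. Depth mapping can’t remove that conflict, because the focal distance is baked into the optics, but it can shrink it: keep the content people stare at for long stretches near the headset’s focal distance, and avoid parking UI right in front of their face. That’s often the difference between a comfortable session and a massive headache.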
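You can put a number on that tug-of-war. Vergence distance is where the content appears to be; accommodation distance is fixed by the optics. A common way to express the mismatch is in diopters (inverse meters): |1/d_content − 1/d_focal|. Here’s a minimal sketch, assuming a hypothetical 1.5 m focal distance (check your headset’s actual figure) and an illustrative 0.5 D comfort threshold. Both values are assumptions, and published comfort zones are asymmetric and vary between studies, so treat the symmetric limit below as a simplification.

```python
import math

FOCAL_DISTANCE_M = 1.5  # assumption: the headset's fixed focal distance
COMFORT_LIMIT_D = 0.5   # assumption: illustrative comfort threshold in diopters


def diopters(distance_m: float) -> float:
    """Convert a viewing distance in meters to diopters (1/m); infinity -> 0."""
    if distance_m <= 0:
        raise ValueError("distance must be positive")
    return 0.0 if math.isinf(distance_m) else 1.0 / distance_m


def vac_mismatch(content_distance_m: float, focal_distance_m: float = FOCAL_DISTANCE_M) -> float:
    """Vergence-accommodation mismatch in diopters."""
    return abs(diopters(content_distance_m) - diopters(focal_distance_m))


def comfortable_range(focal_distance_m: float = FOCAL_DISTANCE_M,
                      limit_d: float = COMFORT_LIMIT_D) -> tuple[float, float]:
    """Nearest and farthest content distances (m) whose mismatch stays within the limit."""
    focal_d = diopters(focal_distance_m)
    near = 1.0 / (focal_d + limit_d)
    far_d = focal_d - limit_d
    far = math.inf if far_d <= 0 else 1.0 / far_d
    return near, far


for d in (0.3, 1.0, 1.5, math.inf):
    m = vac_mismatch(d)
    print(f"{d} m -> {m:.2f} D", "ok" if m <= COMFORT_LIMIT_D else "strain risk")
print(comfortable_range())
```

Note how fast the mismatch grows for near content: in diopters, moving from 1.0 m to 0.3 m costs far more than moving from 1.5 m out to infinity.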

Where is the line between intuitive depth cues and distracting visual clutter?

The line is drawn at intentionality. Intuitive depth cues—like a subtle drop shadow or a slight blur on a background—act as silent guides, telling the brain what matters without demanding attention. They create hierarchy. Visual clutter happens when you overdo it, using too many competing layers or aggressive gradients that fight for the user’s focus. If the user has to stop and “figure out” the interface, you’ve crossed from depth into distraction.